
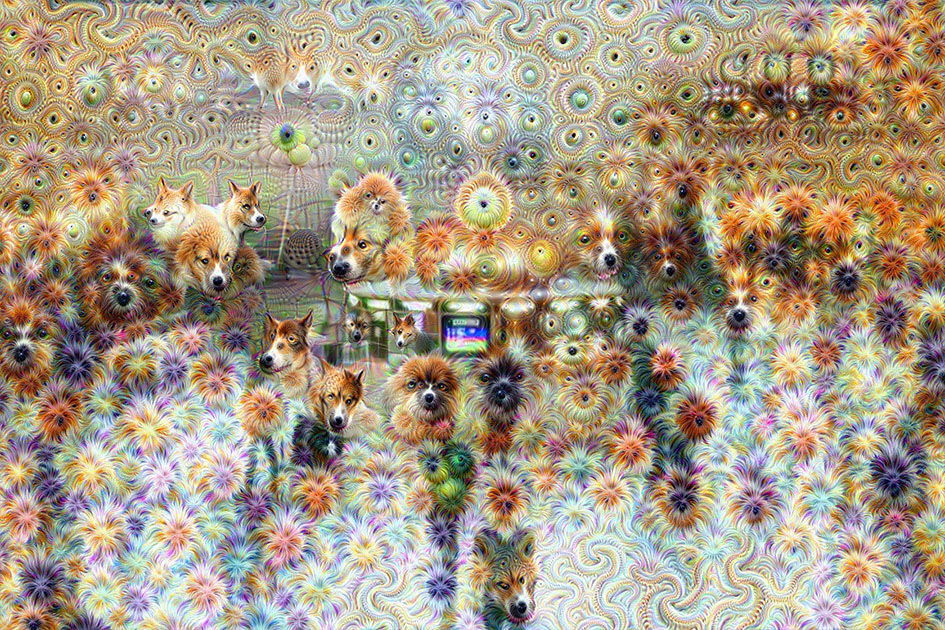

Raphaël Bastide, Handmade Deep Dream (2015).

Most write-ups of DeepDream have stumbled over the very concept of a neural net, which doesn’t actually have all that much to do with biological neurons. Neural nets are one tool of machine learning, the field of computer science focused on building trainable systems for pattern recognition and predictive modeling, which, likewise, doesn’t have much to do with human learning. For example, take image recognition of porn images, used to classify whether an image should be filtered from an all-ages search. Programmers feed the network a “training set” of porn images and nonporn images, each classified as such by hand. Based on the training set, the neural network iteratively fine-tunes a large internal set of probabilities and conditions, using them to make a best guess as to whether an unlabeled image is pornographic or not. This is very much an estimate, one based on a great number of interdependent probabilities. The predominance of certain skin tones, the presence of certain shapes, the absence of other features: all of these lend greater or lesser weight to the neural net’s ultimate decision. At the end of the training, the network performs far better than it did at the start, which is why it is called “learning.”

By the time you get to the enormous deep learning architectures that Google (where I used to work, and where my wife still does) and others are creating, you have neural networks with 20-plus layers that are capable of achieving remarkable feats of “learning,” such as image recognition, but you have another phenomenon as well: The network’s functioning has become fairly opaque and its behavior has become emergent. The result is no longer algorithmic because an algorithm specifies concrete steps to accomplish a task. The steps here created the neural net itself, but once trained, it has become a system of unpredictable analysis and feedback, only loosely under our control.

This is, in fact, why I preferred to avoid neural networks and other forms of machine learning in my own programming: If something went wrong, it was almost impossible to fix it without breaking something else. You had to settle for programs that were mostly, approximately working, not perfect. And indeed, the most impressive accomplishments in artificial intelligence today are coming from networks that are increasingly opaque in their mechanism. Their inner workings do not appear “rational,” nor are they algorithmic.

The anti-computational backlash against DeepDream is predictable: Pacific Standard’s Kyle Chayka deems it kitsch. Yet in saying “the computer is undergoing something that humans experience during hallucinations,” he makes the mistake of imposing a clichéd pattern on a very different phenomenon; in other words, he does exactly the thing that DeepDream does. Quoting Walter Benjamin on the emptiness of kitsch is as mechanical and predictable a move as DeepDream’s analysis, evidence that we often do no better than a computer when it comes to originality and creativity. The importance of DeepDream does not lie in the resultant images, but in the computer’s grasp of similarity, the analogical process that lies at the heart of creativity, as well as in its trained and stochastic nature, a far cry from the deterministic algorithms that form the fundament of computer science. Art historian Barbara Maria Stafford calls analogy “a metamorphic and metaphoric practice for weaving discordant particulars into a partial concordance,” the ability to understand that something can be like another thing without being identical to it. What DeepDream does is computationally intensive yet incredibly primitive next to the feats of the brain: in essence, it’s finding bits of a picture that vaguely resemble its archetypes of eyes and birds.
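The training process described above, in which a network is fed hand-labeled examples and iteratively tunes its internal numbers until its guesses improve, can be made concrete with a rough sketch. What follows is not how any production image filter works: it is a single artificial "neuron" (logistic regression) trained by gradient descent, where a real system would stack many layers. The two features per example (a skin-tone fraction and a shape score), the toy data, and every function name here are hypothetical, invented purely for illustration.

```python
import math
import random

# Hypothetical hand-labeled training set: each example is
# ([skin_tone_fraction, shape_score], label), where label 1 means
# "filter this image" and 0 means "allow it". The numbers are made up.
TRAINING_SET = [
    ([0.9, 0.8], 1), ([0.8, 0.6], 1), ([0.7, 0.9], 1),
    ([0.2, 0.1], 0), ([0.1, 0.3], 0), ([0.3, 0.2], 0),
]

def predict(weights, bias, features):
    """Return the estimated probability that the example has label 1."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))

def log_loss(weights, bias, examples):
    """Average cross-entropy: lower means the guesses match the labels better."""
    total = 0.0
    for features, label in examples:
        p = min(max(predict(weights, bias, features), 1e-12), 1 - 1e-12)
        total -= label * math.log(p) + (1 - label) * math.log(1 - p)
    return total / len(examples)

def train(examples, epochs=200, learning_rate=0.5, seed=0):
    """Nudge the weights after every example to shrink the prediction error."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.1, 0.1) for _ in examples[0][0]]
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = predict(weights, bias, features) - label
            # Each feature's weight moves in proportion to how much that
            # feature contributed to the wrong guess.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

untrained = train(TRAINING_SET, epochs=0)   # random starting weights only
trained = train(TRAINING_SET)
print("loss before training:", log_loss(*untrained, TRAINING_SET))
print("loss after training: ", log_loss(*trained, TRAINING_SET))
print("estimate for a new example:", predict(*trained, [0.85, 0.85]))
```

Even in this toy, nobody writes a rule such as "filter if the skin-tone fraction exceeds some threshold." The final weights fall out of the training data, and the output is a probability rather than a verdict. With millions of weights spread across 20-plus layers, the same process yields the opacity the essay describes.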
